three projects. twelve weeks.

all shipped.

How I audited an entire enterprise web ecosystem, designed a demo discovery platform from zero to live, and innovated at an org-wide hackathon.

A $28 billion acquisition. Two disconnected ecosystems. One summer to help make sense of it.

In 2024, Cisco acquired Splunk for $28 billion. When I joined in summer 2025, Splunk was in the middle of a complex integration: preparing to consolidate into cisco.com while maintaining its distinct product ecosystem and brand. This created structural UX problems that individual product teams could not see from inside their silos.

My work touched three layers of this problem: auditing the fragmented current state, improving how users discovered and navigated products, and designing AI-powered features that pushed the experience forward.

Eight domains inspected through Nielsen's 10 Heuristics

I combed through every page, component, and user flow across Splunk's web ecosystem. Every violation was documented by heuristic, rated by severity (critical, major, or minor), and accompanied by screenshots and specific recommendations. The goal was not just to find problems but to give sprint teams something they could immediately act on.

The audit also revealed something important: product teams working on individual domains had no visibility into the patterns across the whole ecosystem. Navigation inconsistencies, terminology fragmentation, and broken information flows only became visible when you looked at all eight domains together. That perspective was the unique value I added.

I built an interactive version of the audit in Claude Code for stakeholder accessibility. Clicking any stage or domain card surfaces the cross-domain pain points and recommendations.

Users could not find the demos. So they never used them.

Splunk had no centralized home for product demos. They were buried under Main Nav, then Resources, then filtered by "Product Tours." Three or more clicks to get to something most users never discovered existed. I analyzed Mouseflow session recordings and confirmed: nearly no one found the demos through organic navigation. The demos were effectively invisible.

My goal was to design a dedicated discovery platform that put demos front and center, and to get it live before the end of my internship.

"Users who found the demos had to navigate through three or more clicks, and most users never discovered they existed at all."

Mouseflow session analysis, June 2025

A drop-off moment that needed to be addressed

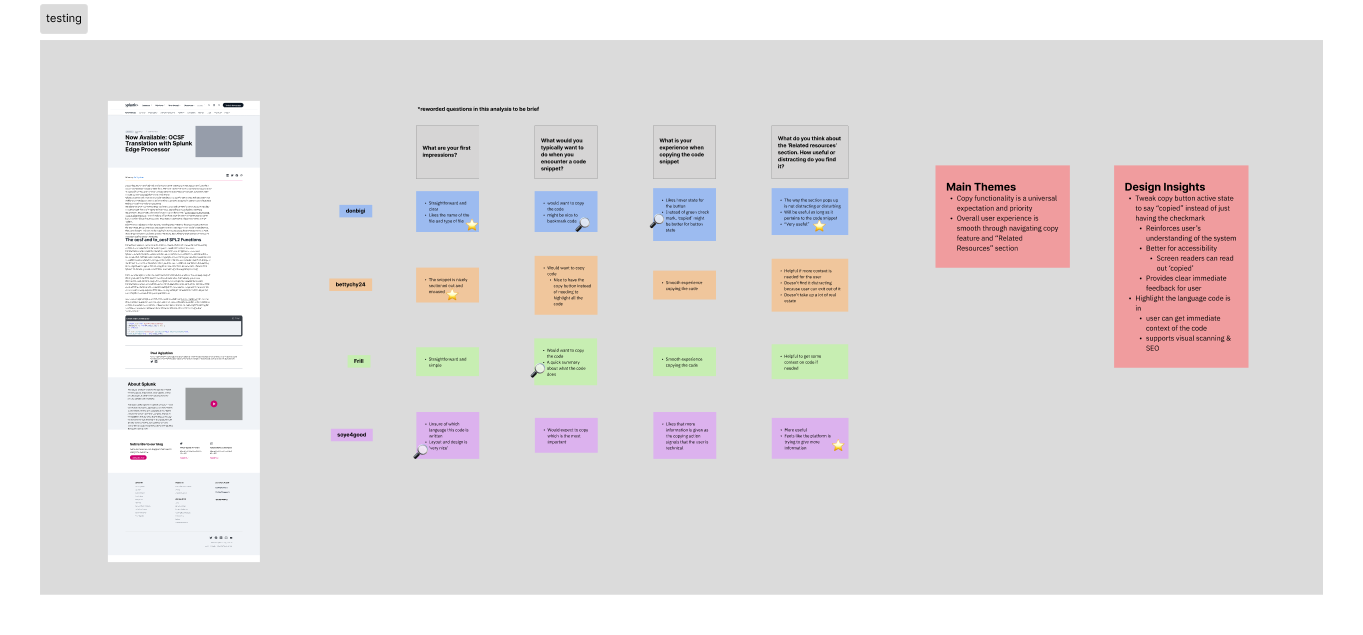

Through research, I identified code snippets on Splunk's technical blogs as a high-intent drop-off point: a user reads a post, copies a snippet, and leaves. That moment of interaction signals interest, but nothing was being done with it.

I entered our team's hackathon as the only UX intern in the room. I started with competitor analysis to see where our experience fell short and to understand how AI could be integrated in line with Cisco's mission of creating more personalized experiences.

The concept: when a user copies a code snippet, that action triggers contextual, AI-powered resource recommendations, generated through Cisco's LLM pipeline and based on the user's interests and web activity.

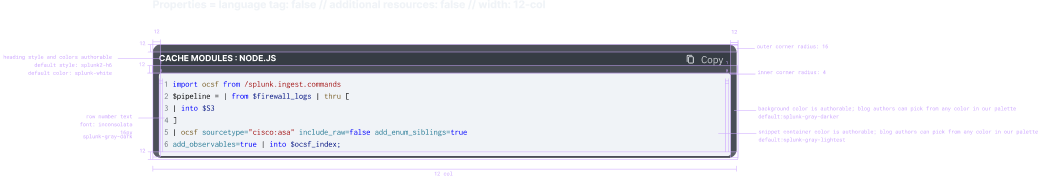

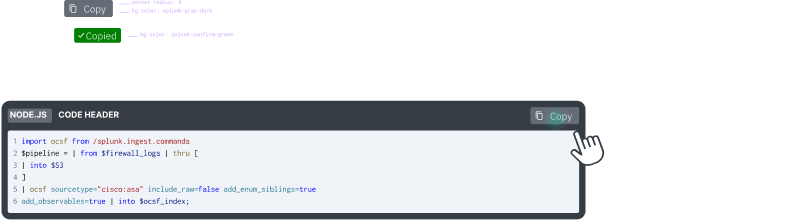

Once the concept was validated, I delivered full engineering-ready specs, not just pretty mockups. That meant spacing and sizing annotations; component states; responsive breakpoints for desktop, tablet, and mobile; interaction triggers; and color and typography tokens. The goal was that an engineer could pick this up without needing to ask me a single question.

I was figuring it out as I went. Here's what I got right, and what I'd do differently.

This was genuinely one of the first times I had designed an AI feature. I didn't come in with a formal AI design framework; I was learning on the fly. But I did make some intentional decisions around trust and transparency, and I've thought a lot since about what I'd add now.